Grid Computing Technology

Introduction

This research seminar report focuses on an emerging technology called Grid computing. Grid technology uses divide-and-conquer tactics to distribute computationally intensive tasks among any number of separate computers for parallel processing. It allows unused CPU capacity, including in some cases the downtime between a user's keystrokes, to be used in solving large computational problems. The report is divided into three parts: what the Grid is, the rationale behind it, and the present and future of Grid technology.

What is Grid computing technology?

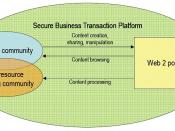

Grid computing is an emerging computing model that provides the ability to perform higher throughput computing by taking advantage of many networked computers to model a virtual computer architecture that is able to distribute process execution across a parallel infrastructure. Grids use the resources of many separate computers connected by a network (usually the Internet) to solve large-scale computation problems.

Grids provide the ability to perform computations on large data sets, by breaking them down into many smaller ones, or provide the ability to perform many more computations at once than would be possible on a single computer, by modeling a parallel division of labor between processes.

(Wikipedia, 2006, http://en.wikipedia.org/wiki/Grid_computing)
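
The idea of breaking a large data set into smaller ones and working on the pieces at once can be illustrated with a minimal Python sketch. It is only an illustration under stated assumptions: local threads stand in for the separate networked computers, and the function names (split_into_chunks, sum_of_squares, grid_sum_of_squares) are hypothetical, not part of any Grid toolkit.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(data, num_chunks):
    """Divide data into at most num_chunks contiguous pieces of near-equal size."""
    size, extra = divmod(len(data), num_chunks)
    chunks, start = [], 0
    for i in range(num_chunks):
        # The first `extra` chunks take one additional element each.
        end = start + size + (1 if i < extra else 0)
        if end > start:  # skip empty pieces when data is shorter than num_chunks
            chunks.append(data[start:end])
        start = end
    return chunks


def sum_of_squares(chunk):
    """The work unit that a single (hypothetical) grid node runs on its chunk."""
    return sum(x * x for x in chunk)


def grid_sum_of_squares(data, num_nodes=4):
    """Split the job, run the pieces concurrently, then combine the partial results."""
    chunks = split_into_chunks(data, num_nodes)
    # Assumption: threads stand in for networked computers. A real grid
    # would send each chunk to a remote machine and collect the answers.
    with ThreadPoolExecutor(max_workers=num_nodes) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    print(grid_sum_of_squares(list(range(10)), num_nodes=3))
```

The combined result is the same as computing the sum on one machine; the only difference is that each piece could be handled by a different computer at the same time.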

Sometimes it's easier to start defining Grid computing by telling you what it isn't. For instance:

It's not artificial intelligence.

It's not some kind of advanced networking technology.

It's also not some kind of science-fictional panacea to cure all of our technology ailments.

(http://www-128.ibm.com/developerworks/grid/)

If you can think of the Internet as a network of communication, then Grid computing is a network of computation: tools and protocols for coordinated resource sharing and problem solving among pooled assets. These pooled assets are known as virtual organizations. They can be distributed across the globe; they're heterogeneous (some PCs, some...
