Webster's Dictionary defines "computer" as any programmable electronic device that can store, retrieve, and process data. The basic idea of computing emerges in the 1200s, when a Muslim cleric proposes solving problems with a series of written procedures.

As early as the 1640s, mechanical calculators are manufactured for sale. Records exist of earlier machines, but Blaise Pascal invents the first commercial calculator, a hand-powered adding machine. Although Gottfried Leibniz attempts mechanical multiplication in the 1670s, the first true multiplying calculator appears in Germany shortly before the American Revolution.

In 1801 a Frenchman, Joseph-Marie Jacquard, builds a loom that weaves by reading patterns of holes punched into small sheets of hardwood. These plates are inserted into the loom, which reads (retrieves) the pattern and creates (processes) the weave. Powered by water, this "machine" comes 140 years before the development of the modern computer.

Shortly after the first mass-produced calculator (1820), Charles Babbage begins his lifelong quest for a programmable machine.

Although Babbage was a poor communicator and record-keeper, the design of his Analytical Engine is sufficiently developed by 1842 that Ada Lovelace translates a paper describing it and adds notes of her own, including a procedure by which the machine could compute Bernoulli numbers. She is generally regarded as the first programmer. Twelve years later George Boole, while professor of mathematics at Queen's College, Cork, writes An Investigation of the Laws of Thought (1854), and is generally recognized as the father of computer science.

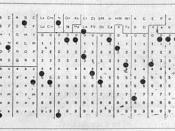

The 1890 census is tabulated on punch cards similar to the ones used 90 years earlier to create weaves. Developed by Herman Hollerith of MIT, the system reads the cards electrically rather than by purely mechanical means. Hollerith's Tabulating Machine Company is a forerunner of today's IBM.

Just prior to the introduction of Hollerith's machine, the first printing calculator appears. In 1892 William Burroughs, a former bank teller in poor health, introduces a commercially successful printing calculator.