
Regression Analysis

August 18, 2008

Introduction

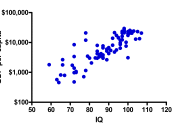

Regression analysis and correlation analysis are two methods widely used in statistics to investigate associations between variables. Both measure the extent of the relationship among variables, in ways that are similar yet distinct. In regression analysis, a single dependent variable, Y, is treated as a function of one or more independent variables, such as X1, X2, X3, and so on.
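To make the idea of Y as a function of X1, X2, ... concrete, the following Python sketch fits a linear model Y = b0 + b1X1 + b2X2 by ordinary least squares, solving the normal equations with Gaussian elimination. It is an illustration under stated assumptions, not the paper's method: the linear functional form, the helper names `fit_ols` and `solve`, and the data (generated without noise from Y = 1 + 2X1 + 3X2) are all hypothetical.

```python
from typing import List


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix so that row operations act on a and b together.
    m = [list(map(float, row)) + [float(b[i])] for i, row in enumerate(a)]
    for col in range(n):
        # Swap in the row with the largest pivot to limit rounding error.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_ols(xs: List[List[float]], y: List[float]) -> List[float]:
    """Return [b0, b1, ..., bk] minimising the sum of squared residuals."""
    # Prepend a column of ones so that b0 acts as the intercept.
    design = [[1.0] + list(row) for row in xs]
    k = len(design[0])
    # Normal equations: (X^T X) b = X^T y.
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(design, y)) for i in range(k)]
    return solve(xtx, xty)


# Hypothetical data: two independent variables and an exact linear response.
x_data = [[1, 2], [2, 1], [3, 4], [4, 3], [5, 5]]
y_data = [1 + 2 * x1 + 3 * x2 for x1, x2 in x_data]
coefs = fit_ols(x_data, y_data)
print([round(c, 6) for c in coefs])
```

Because the hypothetical responses contain no noise, the fitted coefficients should come out very close to 1, 2 and 3. With real observations the same procedure returns the coefficients that minimise the squared residuals rather than an exact recovery.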

Regression analysis presumes that the values of both the independent and dependent variables are obtained randomly and without error. Some forms of regression analysis further assume that, for any given value of the independent variable, the values of the dependent variable are normally distributed about some mean. Applied to observed data on the independent and dependent variables, the procedure produces the equation that best approximates the functional relationship between them (Doane and Seward, 2007).
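For a single independent variable, the most common way to obtain this "best" equation is ordinary least squares, which has a closed form: the slope is b1 = Sxy / Sxx and the intercept is b0 = ȳ - b1x̄, where Sxy and Sxx are sums of cross-products and squares about the means. The Python sketch below computes it; the function name `simple_linear_regression` and the observations are hypothetical, chosen only for illustration.

```python
from statistics import mean
from typing import Sequence, Tuple


def simple_linear_regression(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Return (intercept, slope) of the least-squares line y = b0 + b1 * x."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least two points")
    x_bar, y_bar = mean(x), mean(y)
    # Sums of cross-products and squares about the means.
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    if s_xx == 0:
        raise ValueError("x must not be constant")
    slope = s_xy / s_xx
    # The least-squares line always passes through the point of means.
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Hypothetical observations, for illustration only.
x_obs = [1, 2, 3, 4, 5]
y_obs = [2, 4, 5, 4, 5]
b0, b1 = simple_linear_regression(x_obs, y_obs)
print(f"Y = {b0:.2f} + {b1:.2f} X")
```

For these observations Sxy = 6 and Sxx = 10, so the fitted line is Y = 2.2 + 0.6X.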

Correlation analysis measures the degree of association between two or more variables. Methods of correlation analysis assume that, for any pair or set of values taken under a given set of conditions, the variation in each variable is random and follows a normal distribution. Applying correlation analysis to the dependent and independent variables yields a statistic called the correlation coefficient, r. The square of this statistic, r², known as the coefficient of determination, describes the proportion of the variation in the dependent variable that is associated with its regression on an independent variable (Doane and Seward, 2007).
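The link between r and r² can be checked numerically. This Python sketch, using hypothetical data and a hypothetical function name (`correlation_and_r_squared`), computes the Pearson correlation coefficient r and compares r² with the share of variation in Y explained by the least-squares line, 1 - SSE/SST. For a simple linear regression with an intercept the two quantities agree, which is why r² is read as the proportion of explained variation.

```python
import math
from statistics import mean
from typing import Sequence, Tuple


def correlation_and_r_squared(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Return (r, r squared, 1 - SSE/SST) for a simple linear regression of y on x."""
    x_bar, y_bar = mean(x), mean(y)
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    s_yy = sum((yi - y_bar) ** 2 for yi in y)
    # Pearson correlation coefficient.
    r = s_xy / math.sqrt(s_xx * s_yy)
    # Least-squares line, used to measure how much variation in y it explains.
    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    sse = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))  # unexplained
    sst = s_yy  # total variation of y about its mean
    return r, r * r, 1 - sse / sst


# Hypothetical observations, for illustration only.
x_obs = [1, 2, 3, 4, 5]
y_obs = [2, 4, 5, 4, 5]
r, r_sq, explained = correlation_and_r_squared(x_obs, y_obs)
print(f"r = {r:.3f}, r^2 = {r_sq:.3f}, 1 - SSE/SST = {explained:.3f}")
```

With these observations r is about 0.775, and both r² and 1 - SSE/SST equal 0.6 up to floating-point rounding.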

Research Issue

The regression analysis will examine the extent of the relationship between the variables of...