Machines today can simulate practically anything, from forecasting the weather days ahead to imitating the human cognitive capacity to understand a given situation and return the optimal solution. Machines grow more technologically advanced as we use them daily, but can they ever be strongly artificially intelligent? Can a machine have cognitive states, and thereby become sapient and think as humans do? John Searle, a philosophy professor at UC Berkeley, argues that no machine can have a mind, that is, that no machine can be a strong AI (artificial intelligence).

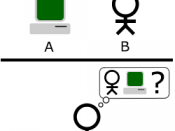

What is the distinction between strong and weak AI? According to Searle, on the strong AI view "the computer is not merely a tool in the study of the mind; rather, the appropriately programmed computer really is a mind, in the sense that computers given the right programs can be literally said to understand and have other cognitive states." Strong AI can thus be defined as the claim that some forms of artificial intelligence can truly reason and solve problems, and that as a result it is possible for machines to become self-aware and exhibit human-like thought processes.

In contrast, weak AI refers to the use of software to study the behavioristic and pragmatic view of intelligence. In weak AI, the software is not claimed to be intelligent; it is a tool people use to test hypotheses about the nature of intelligence. An example is a program that solves a mathematical problem or computes the average lifespan of a certain species. The distinction is that in strong AI the computer becomes a conscious mind, not simply an intelligent, problem-solving device. However, this does not mean that devices that demonstrate weak AI are necessarily weaker or less capable at solving problems than devices that demonstrate strong AI.

Excellent essay

This essay presents many valid points, and I have to say I agree that machines cannot possess strong AI. Still, the movies we have today scare me: what if someday they did? Anyway, overall a very informative essay, and I would like to see more like it.
